After completing the TechStart grant in February this year, we used the business plan as the basis for two other grant submissions. We applied for the MTI Seed Grant and the Libra Future Fund. The plan was to raise enough money to work on RoboGoby as a full-time job this summer. Unfortunately, we did not secure either of the grants and so had to go with Plan B.

While we did not get any money out of our grant attempts, we learned a lot along the way. We have gained a much better understanding of the grant process, as well as the process of securing funding more broadly. These are both skills that we will be putting to use in the future. We also had to quickly change our summer plans in order to make sure we had an income stream for the summer.

Plan B consists of doing yard work to make money over the summer, along with smaller engineering projects that we can complete in one summer. Check out our Facebook page for updates about new projects. Our final blog post will go up in the coming weeks and will explain how to follow us on our new website for more info about RoboGoby and related projects.

Here is our advertisement for yard work; please don't hesitate to contact us.

RoboGoby – ROV/AUV Submersible

Project RoboGoby is a design project focused on designing and building a working and marketable UUV. This project is being spearheaded by Limbeck Engineering, a group of four college students from Maine. Please press the HOME button for further information.

6.27.2016

1.04.2016

End of Year Update

This post quickly highlights the advancements we have made in the last couple of months, as well as our upcoming work.

Summer Work

We have finally gotten around to publishing a video showing RoboGoby's current driving capabilities. We'd like to thank the Maurers for allowing us to use their pool to film RoboGoby!

Current Status

We recently secured funding from the Maine Technology Institute through their TechStart grant. We've used this money to pay for professional market research. Using what we learned from this market research, we will use the next two months to finalize a business plan for the coming year and begin writing proposals for future R&D grants. We hope that the money from these R&D-centered grants will allow us to develop an alpha model of RoboGoby over the summer of 2016.

As part of our work on a business plan we are reaching out to many of our former contacts who have shown interest in the project, as well as many new individuals who we believe would benefit from, or be interested in, our project. If you are interested or know somebody who might be interested, please send along our contact information or email us directly at limbeck.engineering@gmail.com.

Labels: Engineering, ROV, AUV

Business,

Sub Prototype

8.25.2015

Wiring Harness

One of the final tasks in building our prototype was wiring. This year we spent a lot more time planning out our wiring than in our first prototype. We decided this was important because tracking down and fixing any faulty connections was very difficult when our wires were so unorganized.

Wiring Plan:

As shown in our post about the watertight compartment, we plan to have five major cables (one in each cord grip) run from the electronics to inside the compartment – three entering from the front and two from the back.

Front

The three cables protruding from the front are organized in the following way:

- One has seven wires and will be for the two small thrusters in the front half.

- One has eight wires, two for each of four LEDs.

- And the final cable has eighteen wires that will connect to the camera, servo motor, IMU, depth sensor, and two LIDARs.

Rear

The two cables to the back are split so that:

- One has ten wires which will run to both small thrusters and the large thruster in the back half.

- The second has three wires which run out the top of the sub to act as our tether, as in this post.
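As a quick sanity check on the plan above, the wire counts can be tallied per end of the compartment. This is only a sketch: the counts come from the lists above, while the dictionary layout and the names in it are made up for illustration.

```python
# Wire counts per cable, taken from the front and rear lists above.
# The keys and structure are illustrative, not part of the original build notes.
CABLES = {
    "front_thrusters": {"end": "front", "wires": 7},
    "front_leds": {"end": "front", "wires": 8},
    "front_sensors": {"end": "front", "wires": 18},
    "rear_thrusters": {"end": "rear", "wires": 10},
    "tether": {"end": "rear", "wires": 3},
}


def wires_at(end):
    """Total number of wires entering the compartment from one end."""
    return sum(c["wires"] for c in CABLES.values() if c["end"] == end)


def total_wires():
    """Total number of wires across all five cables."""
    return sum(c["wires"] for c in CABLES.values())


if __name__ == "__main__":
    print("front:", wires_at("front"))
    print("rear:", wires_at("rear"))
    print("total:", total_wires())
```

Keeping a table like this next to the wire-marker labels makes it easier to confirm that every conductor in a cable has a destination before sleeving.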

Wiring the Loom:

Because we spent more time planning, the actual process of wiring the loom didn't take too long. Inspired by the power supply we bought, we came up with a good way of organizing the wires.

We stripped the large cables far enough back that we had all the free wire we needed to route and solder our electronics in place.

We then grouped wires based on the final destination (e.g. three for each small thruster or four to the USB cable) and put them in sleeving.

Then to keep the sleeving from fraying we zip-tied each end and covered it with heat shrink.

This process was the same for all of our components. We also added two more organizational ideas to make the wiring easy to follow.

First, we color-coordinated the heat shrink to show the function of each set of wires. For example, we marked the horizontal thrusters with blue heat shrink and the vertical thrusters with yellow heat shrink (as pictured above).

Second, we got wire markers (numbered/lettered stickers) to label similar looking parts. For example, we labeled each of the motor controllers and used labels to indicate which side of connectors went to ground.

We covered all of our solder joints – on top of all the sleeving – with similarly colored heat shrink, making sure to put liquid electrical tape on each one first to help with waterproofing.

The last precaution that we took against any leakage was epoxying the ends of the cable. We did this because it is possible for water to enter the cables and work its way into the watertight compartment inside the casing as it can bypass the cord grips.

We cut a small slit in each of the cables so that we could fill it with epoxy, then used a zip tie to keep the epoxy tightly packed in the top 1/4" of the cable. The zip tie in the picture also ensures that the epoxy does not drip too far into the cable, which would compromise the effectiveness of the plug.

|

| Cable with Epoxy |

Below we have two pictures of the sub: one before wiring the loom and one after.

|

| Before Wiring the Loom |

|

| After Wiring the Loom |

8.24.2015

Front Assembly – Camera and Sensor Mounts

After designing our front camera and sensor mounts in SolidWorks, we moved on to prototyping and assembling the pieces. We designed all of the mounts to be 3D printed using the same MakerBot Replicator we used to print the thruster curtains and rear cone.

Assembly:

Camera Mount

Printing:

Our first couple of prints were test prints. We wanted to see if the printer could handle the complex camera mount and test the orientation of each of the side mounts. After a few re-designs we were able to print the final camera mount, but the side mounts continued to give us problems. Due to their large footprint, the filament kept peeling off of the print plate.

After more than four unsuccessful attempts at printing the front camera mount we switched over to a different 3D printer. We used a Dremel 3D Printer with PLA. The print turned out well, although getting the software to work was another story (let's just say it needed some persuasion).

|

| Right Cover |

|

| Left Cover |

|

| Cover Pair |

There are a few different aspects to the assembly of the Camera Mount. The assembly consisted of adding threaded inserts, painting the printed plastic, fitting the polycarbonate shield, and finally epoxying the camera and mounting it in the front.

Threaded Inserts:

Similar to our posts about the through hole thrusters and the large rear thruster, we used threaded inserts to mount many of our front pieces. The entire front assembly used ten threaded inserts. Two M3 inserts were used to mount the servo on the right cover. Two M4 inserts were used in the camera cast to hold the camera cover on the camera. Two more M4s were used for the sensor mount. The last four, all M6 inserts, were used on the left and right side covers to hold them on the aluminum frame. To get a better understanding of the location of the inserts, refer to the images at the bottom of this post. The holes colored red are where the inserts will be used. Below is a CAD drawing of the right cover:

|

| Front Right w/ Threaded Inserts |

Epoxying the Camera:

Similar to last year we decided to epoxy our camera. We epoxied the camera in order to waterproof it without having to design a watertight space in the front of the submersible. To epoxy the camera we mounted it to the camera cover using M2 bolts.

|

| DUO w/o Epoxy |

We then covered it in two layers of epoxy. The first layer was black heat-sink epoxy. We used this to ensure that the electronics on the camera board did not overheat. The second layer was optically clear epoxy. We used this to put some finishing touches on our first coat and to cover the built-in LEDs on the board.

|

| Epoxying Camera |

Mounting the Camera:

In order to mount the camera after it had dried we needed to do a few small things. First we mounted the servo to the right side cover using the M3 threaded inserts. We then used the servo horn that comes with the servo and screwed it to the camera mount. Next we screwed the LED packages onto the camera cover and mounted all of that to the camera cover. We added a 1/4" Delrin rod to support the side of the camera opposite the servo. We did this by screwing it into the camera mount closest to the servo and sliding it through the entire mount.

|

| Servo w/ Horn and Delrin Shaft |

We also mounted the LiDAR to the top half of the camera mount. We use this for measuring distances in order to detect any objects in front of the submersible.

|

| Mounted LiDAR |

Sensor Mount

The sensor mount was a little less involved than the camera mount. After printing the mount (pictured below) we epoxied the LiDAR's PCB and tested it.

|

| Empty Sensor Mount |

We then wired the MS5803 pressure sensor and the Razor IMU. After finishing the wiring we used heat-sink epoxy to mount the electronics in the mount. We also added M4 threaded inserts to the mount in order to mount the LiDAR. Below is a picture of the finished sensor pack. You can see our downward-facing LiDAR as well as where the IMU and pressure sensor are epoxied in place.

|

| Finished Sensor Pack |
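Since the pressure sensor doubles as our depth sensor, here is a minimal sketch of how an absolute pressure reading maps to depth using the hydrostatic relation depth = (P - P_surface) / (rho * g). It assumes the reading is already in millibar and that the sub is in fresh water; the function name and constants are illustrative and are not taken from our actual firmware.

```python
# Hydrostatic depth estimate from an absolute pressure reading.
# All names and default values here are illustrative assumptions.
G = 9.80665             # standard gravity, m/s^2
RHO_FRESH = 1000.0      # approximate density of fresh water, kg/m^3
SURFACE_MBAR = 1013.25  # standard atmosphere; better to measure at the surface before a dive


def depth_m(pressure_mbar, surface_mbar=SURFACE_MBAR, rho=RHO_FRESH):
    """Return depth in meters for an absolute pressure given in millibar."""
    pascals = (pressure_mbar - surface_mbar) * 100.0  # 1 mbar = 100 Pa
    return pascals / (rho * G)


if __name__ == "__main__":
    # 100 mbar above surface pressure is roughly one meter of fresh water.
    print(round(depth_m(1113.25), 3))
```

For salt water the same function can be called with a higher density (around 1025 kg/m^3 is a common approximation), which gives a slightly shallower depth for the same reading.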

Assembly

After finishing both the sensor mount and the camera mount we assembled them on the front of the sub. To assemble the front we first mounted the camera/servo combination to the right side cover. In the picture you can also see the LED units we will use for lighting.

|

| Camera Mounted on Right Cover |

Next we bent a piece of polycarbonate to fit in the slot in the printed plastic and pressed the left cover on. We then slid it inside our frame. The finished assembly is pictured below:

|

| Finished Front Assembly |

Labels: Engineering, ROV, AUV

Prototype,

Sub Prototype

8.22.2015

Thank-Yous

We have a few people that we want to thank for helping us with RoboGoby 2.0 throughout this past summer.

Darin Marion - Porter's Precision Machining

Darin machined the parts for our watertight compartment. Beyond machining the parts he was helpful in making a few design changes to our watertight pieces.

Matt Cyr - Cumberland Ironworks

Cumberland Ironworks welded the watertight pieces on the middle section of our submersible. We especially want to thank them for being very flexible as we had to reschedule a few times.

Kris Jennings - Downeast Woodworks

Kris was the reason that we have so many nicely routed parts. He let us come into his shop and helped us cut out many of our parts using his router.

David Smail - Freeport High School

David Smail was very helpful in getting our parts 3D printed. He is in charge of the Freeport High School 3D printers and was able to help us start prints a few days a week throughout the summer, allowing us to go through a few iterations of each part.

Jon Amory & Alan Lucas - Baxter Academy

Jon Amory has been an adviser throughout the project, and we owe him a lot. Alan Lucas was able to help us get a part 3D printed when we needed it on very short notice.

Geoff Nelson - Royal River Boat Repair

Geoff Nelson was helpful in a few ways. Most notably he helped with some aspects of the mechanical and electrical design and allowed us to use many of his tools at Royal River Boat Repair.

The Jennings Family

We need to thank the Jennings for letting us utilize their house and pond for testing and our upcoming launch.

The Libsack Family

The Libsacks have been very kind to let us use their basement as the base for our work throughout the summer. They have also fed us on numerous occasions as we worked late hours.

Yardwork

At the beginning of the summer we did over 100 (collective) hours of yardwork to fundraise for our project. We want to thank the following people for hiring us for this work:

Bob Moore

Liza Bakewell

Michaud

Maddie Vertenten

Kim LaMarre

Margaret Hertz

Kathy Biberstein

Jennifer Libsack

Thanks to their support we were able to raise almost $1500.

8.21.2015

Power Distribution and Tether

Power Supply

The power supply we're using for RoboGoby is a 12v 500W PC power supply. Specifically, it is the Shuttle 500W power supply. This power supply is a good fit for RoboGoby because its maximum current draw matches up with the maximum current draw on our thrusters before cavitation.

The power supply has 3.3v, 5v, 12v, and -12v power rails. We only wanted the 12v rails on the power supply so we cleaned it up. We connected the green wire to ground, allowing the power supply to actually power up when plugged in. To organize the 12v wires, we removed the cover of the power supply (pictured below) and then cut all of the wires except a large group of yellow wires (12v rail) and black wires (ground). We used zip ties and heat shrink along with Ultra Plug Dean Connectors to organize the wires into two positive and negative plugs.

|

| Open Power Supply (not finished) |

|

| Finished 12v Supply |
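To put a rough number on the current available from the 12v rail, the sketch below divides the supply's rated power by the rail voltage (I = P / V). This is an upper bound only: a PC supply's rated wattage is shared across all of its rails, so the real 12v limit printed on the supply's label may be lower. The thruster currents in the example are made-up placeholders, not measurements.

```python
def rail_current_limit(power_watts, rail_volts):
    """Upper bound on rail current if the whole rated power were available on one rail."""
    return power_watts / rail_volts


def within_budget(load_amps, limit_amps):
    """True if the summed load currents stay at or under the limit."""
    return sum(load_amps) <= limit_amps


if __name__ == "__main__":
    limit = rail_current_limit(500, 12)
    print(f"12v rail upper bound: {limit:.1f} A")

    # Hypothetical peak currents for one large and four small thrusters (placeholders).
    hypothetical_loads = [12.0, 6.0, 6.0, 6.0, 6.0]
    print("fits:", within_budget(hypothetical_loads, limit))
```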

Tether Cable

The tether is actually made up of two different cables. The first is a 100ft, 3 wire, 16AWG extension cable. The second is a 3ft, 3 wire, 14AWG power cable. Because we're using an AC power supply, we decided to use an extension cable for our prototype. This allows us to plug in RoboGoby anywhere with AC power, which makes testing easier as we don't have to carry around a battery pack and worry about DC-AC power inversion.

About 1 foot above the submersible the extension cable turns into the slightly larger 14AWG 3 wire tether. We went with 14AWG because this cable is so short that the extra size and weight is not a big deal, and in the future we may want the entire tether to be 14AWG so that larger amp draws would be possible.

|

| Extension Cable |
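For anyone sizing a similar tether, here is a rough sketch of the voltage drop along the cable. It uses nominal copper resistances per 1000 ft (about 4.016 ohms for 16AWG and 2.525 ohms for 14AWG at 20 °C) and doubles the length because current flows out and back. The 120 V mains voltage and the 500 W load are assumptions for illustration, and supply efficiency and power factor are ignored.

```python
# Nominal resistance of solid copper wire, ohms per 1000 ft at 20 C.
OHMS_PER_1000FT = {16: 4.016, 14: 2.525}


def loop_resistance(segments):
    """Round-trip resistance in ohms for a list of (length_ft, awg) segments."""
    one_way = sum(length * OHMS_PER_1000FT[awg] / 1000.0 for length, awg in segments)
    return 2.0 * one_way  # current travels down one conductor and back on another


def voltage_drop(segments, load_watts, mains_volts):
    """Approximate voltage lost in the tether for a given load."""
    current = load_watts / mains_volts
    return current * loop_resistance(segments)


if __name__ == "__main__":
    tether = [(100, 16), (3, 14)]  # lengths and gauges from the description above
    drop = voltage_drop(tether, load_watts=500, mains_volts=120)  # assumed load and mains
    print(f"loop resistance: {loop_resistance(tether):.3f} ohm")
    print(f"voltage drop:    {drop:.2f} V")
```

Under these assumptions the drop is only a few volts, which is one reason running AC down the tether is forgiving compared with sending 12v DC over the same length.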

Cord Grip

We used a cord grip very similar to the ones found on either end of our watertight compartment, which are described here. The only difference is that there is a short (~3") spring to provide support for the wire entering the cord grip.

|

| Cord Grip w/Support |

The cord grip makes sure that the tether is securely attached to the submersible – preventing any slippage – in a way that is both strong and easily removable in case we need to make changes.

Waterproof Connector

The waterproof connector is the one we described in this post. We decided to have a connector outside the submersible so that we could easily detach the sub from the tether, allowing for easier transport and more customizability.

|

| Waterproof Connector |

Though we were originally worried about how much force the connector could take, we have found that it holds up very well to the amount of tension it will experience.

|

| Tether and Connector |

Labels: Engineering, ROV, AUV

Electrical,

Prototype

8.18.2015

Rear Thruster v2.0 – Building

Before reading this you should read about the CAD design for the rear thruster. As a brief intro, the rear thruster body consists of four different parts: the nozzle, the fins that connect the nozzle, the cone, and the motor mount.

Cone

As described in this post, the new rear cone design is larger than our previous cone. This caused a few issues in its manufacturing. We first tried to cut the cone out of a large 5"x6"x6" piece of polyethylene on a CNC machine that has a rotary axis. Unfortunately the piece weighed a bit too much and was very unwieldy, so we had to try something else. Before we move on, here is a picture from when we bored out the polyethylene block with a hole for the motor to sit in...it makes a flower!

|

| Polyethylene "flower" |

We decided to use the 3D printer instead (older version of MakerBot Replicator); surprisingly enough, it worked extremely well. You can see an image of the print with threaded inserts below, as well as an image of the cone inside the body to show how nicely it fits.

|

| Cone w/ Inserts on top of Aluminum Body |

|

| Cone in Aluminum Body |

Quick tip

We are printing this piece with the large flat side facing down. This is because it leaves only a little bit of overhang, which is not a problem for a printer with decent scaffolding. If your printer does not have that ability then, according to one of our advisers, adding a chamfer to the overhang should allow you to safely print the piece.

Motor Mount

The motor mount for the rear thruster was cut out of Delrin, the same material we used to make our small thruster mounts. This is a major improvement from last year as it gives us much more freedom while mounting the motor. Last year we used the small cross mount that comes with the Turnigy SK3 motor which we didn't like very much because it barely sticks out beyond the edge of the motor – meaning we had to have a very small compartment for the motor. This year we are able to have a larger compartment for the motor, which we wanted for cooling and flow purposes, as well as a much more secure connection to the rear cone.

The mount itself is a pretty simple piece. It has four holes near the middle for attaching the motor and four holes around the edge for attaching the mount to the cone. The spokes are as large as we felt comfortable making them, while allowing for water flow but keeping enough strength to hold the motor in place.

We also used the Delrin plastic to make the fins that attached the cone and the nozzle. There are three fins, two of which are the same while the third is slightly different – the different sizes are needed because of the asymmetry of three fins in a four sided cone.

|

| Motor Mount |

|

| Motor on Mount |

For information on the motor, shaft, and propeller that will be mounted to this, check out our post on preparing the motors.

Nozzle

We kept the nozzle that was made last year in this year's prototype. We decided to do this because it is a hard part to manufacture, and last year's version came out nicely and is still in good condition. It was printed in two parts and then melted/epoxied together and, finally, painted red.

|

| Nozzle |

Fins

Assembly

There were a few different tasks required to assemble the rear thruster. First we had to press the threaded inserts into the cone. We used threaded inserts to avoid cross threading and stripping our threads on the relatively weak PLA.

To press the threaded inserts into the printed PLA we heated them with a heat gun and easily pressed them into place.

We then epoxied the fins into the slots in the cone and nozzle to ensure a strong and lasting connection between the two. We also used the same epoxy mixed with acetone to create an epoxy paint. We then used this to paint the cone, getting rid of the shiny PLA look and giving it a smoother finish.

|

| Painting Cone w/ Epoxy |

|

| Painted Cone |

Next we used epoxy to mount the nozzle onto the rear cone. After that we mounted the motor mount/motor to the cone using 12mm M4 bolts. And the thruster is complete (finally)! Below are a few profile shots and pictures of the finished thruster.

|

| Rear Thruster Profile |

Labels: Engineering, ROV, AUV

Mechanical,

Prototype,

Sub Prototype

8.17.2015

Lighting – Building the LED Mounts

After working on the design for our LEDs we moved on to constructing the mounts. The LED units were fast and easy to cut out once we had access to the Laguna CNC Router we mentioned in this post.

After cutting out the aluminum we used a hand drill to make two small holes in the back of each of the LED pockets. These holes are used to both route and organize some of our wire.

We then soldered the wires onto the LEDs before mounting them to the aluminum heat-sinks. The first unit we put together we saved the soldering for last. Our heat-sink worked a little too well and made it difficult to heat up the LED enough to solder on the wires. We learned from our mistake though and the second unit we soldered much quicker and cleaner.

Assembly

After preparation we used heat-sink epoxy to attach the back of the start PCBs to the back of the aluminum pockets. After waiting 3 days for the epoxy to fully cure we used a clear epoxy to cover the rest of the PCB and the tops of the wires, which protrude slightly from the pocketed section of our aluminum heat-sink. We made sure to keep the LED lenses clean and to have our epoxy dry flat. Below is an image of the clear epoxy drying over the LEDs. We mounted them in the vice to keep a level surface. If you look close enough you might also be able to see some black – that is where the heat-sink epoxy squeezed up through the star PCB when we epoxied it the first time.

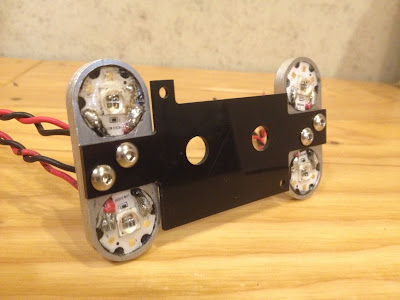

After epoxying the LEDs we used a 4M tap to tap the holes in the middle of the unit. We then mounted the units to the front plate of our camera mount (pictured in green at the end of our camera CAD post).

Preparation

We used the same LEDs for these units as we discussed in this post. Just as a refresher, these LEDs are 10W 850nm LEDs. The diodes themselves are 7mmx7mm, but we bought the LED mounted to a 20mm diameter star PCB. This PCB makes it easier for us to mount and heat-sink the LED to our custom LED unit.

|

| LED ENGIN 850nm on Star PCB (Stock Photo) |

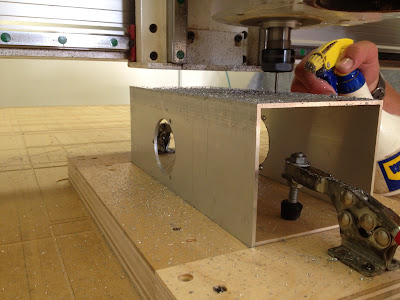

To cut out the LED mounts/heat-sinks we used a 1/8" end mill with the Laguna. While cutting the aluminum we had to make sure our feed rate was slow enough that the bit didn't break and that the bit was cooled to ensure clean cutting. For the feed rate we found that 5 inch/minute works the best. We also needed to continually cool the end mill to keep it cutting well. Due to the combination of the feed rate and the WD-40 we used for cooling, small bits of aluminum "sand-castled" around the path of the end mill. Below is a picture of this as well as a short clip of the router cutting out an aluminum heat-sink.

|

| Cut Aluminum w/ Debris |
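At such a slow feed rate it helps to estimate how long a cut will take before starting it. The sketch below just divides tool path length by feed rate; the 5 inch/minute figure is the one we settled on above, while the path length and number of depth passes in the example are hypothetical.

```python
FEED_IN_PER_MIN = 5.0  # feed rate that worked best for us in aluminum


def cut_time_minutes(path_length_in, passes=1, feed=FEED_IN_PER_MIN):
    """Estimated cutting time in minutes for a tool path repeated over several depth passes."""
    return path_length_in * passes / feed


if __name__ == "__main__":
    # Hypothetical example: a 10 inch tool path cut in 3 depth passes.
    print(cut_time_minutes(10, passes=3), "minutes")
```

This ignores rapid moves and plunges, so real jobs will run somewhat longer than the estimate.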

After cutting out the aluminum we used a hand drill to make two small holes in the back of each of the LED pockets. These holes are used to both route and organize some of our wire.

|

| Back of LED |

We then soldered the wires onto the LEDs before mounting them to the aluminum heat-sinks. On the first unit we put together, we saved the soldering for last. Our heat-sink worked a little too well and made it difficult to heat up the LED enough to solder on the wires. We learned from our mistake, though, and soldered the second unit much more quickly and cleanly.

Assembly

After preparation we used heat-sink epoxy to attach the back of the star PCBs to the back of the aluminum pockets. After waiting 3 days for the epoxy to fully cure we used a clear epoxy to cover the rest of the PCB and the tops of the wires, which protrude slightly from the pocketed section of our aluminum heat-sink. We made sure to keep the LED lenses clean and to have our epoxy dry flat. Below is an image of the clear epoxy drying over the LEDs. We mounted them in the vice to keep a level surface. If you look closely enough you might also be able to see some black – that is where the heat-sink epoxy squeezed up through the star PCB when we epoxied it the first time.

|

| LED Unit Epoxy Drying |

After epoxying the LEDs we used an M4 tap to tap the holes in the middle of the unit. We then mounted the units to the front plate of our camera mount (pictured in green at the end of our camera CAD post).

|

| LEDs Mounted to Camera Cover |

Labels: Engineering, ROV, AUV

Electrical,

Visuals

8.13.2015

Cutting the Aluminum Frame

Once we had all of the thrusters finished we needed to cut out the aluminum body. Using the 5"x5"x12" aluminum frames and a CNC router we were able to cut all of the necessary holes in the frame of RoboGoby.

To cut the aluminum we used a Laguna CNC router. To ensure our aluminum was cut using the correct zero points we did two things. First, we created a pocketed jig that snugly fit the aluminum frame. The jig was made up of five wood pieces (four sides and a bottom) and two planar clamps. We framed the aluminum around the center of the bottom board and then used the planar clamps to hold the aluminum in place. Second, we routed out a large section of the CNC table, cutting a pocket that held the jig at the zero point we defined for it. We then screwed the jig in place and used the clamps to switch out the aluminum pieces. Below is a picture of the aluminum frame mounted in the jig, which was then screwed to the table.

|

| Cutting Aluminum w/ Jig |

Due to the symmetry of our design, the opposing sides of the aluminum had the same cuts for the thrusters and for connecting the different sections. Each side of the submersible had at least four holes for mounting the thruster. The large hole fits our thruster grill and the three small holes are for M4 bolts. On top of these four holes, two of the four sides have four more holes (for a total of eight holes). These holes are for M6 bolts and will be used to attach either the front assembly, rear thruster, or a different aluminum section.

After cutting out the aluminum we used a knife and de-burring tool to get rid of any sharp edges on the aluminum.

If you have been watching the blog closely you will have realized that a couple holes seem to be missing – those for the downward facing LIDAR and the tether cord grip. We decided to wait on cutting these out until we're sure about the design. At the time we cut out the aluminum frame we were unsure of both.

After we had cut out the aluminum frame we decided to decided to cut the cord-grip hole using a drill press. We were able to get a relatively precise hole without the hassle (and time sink) of re-zeroing a CNC machine. We also purchased a 1/2"-14 NPT thread and thread the hole for the cord grip.

The final product looks quite nice and was manufactured using only 2D vector drawings, a CNC machine, and a few hours.

To cut the aluminum we used a Laguna CNC router. To ensure our aluminum was cut using the correct zero points we did two things. First, we created a pocketed jig that snugly fit the aluminum frame. The jig consisted of five wood pieces (four sides and a bottom) and two planar clamps. We framed the aluminum around the center of the bottom board and then used the planar clamps to hold the aluminum in place. Second, we routed out a large section of the CNC table, cutting a pocket that held the jig at the zero point we defined for it. We then screwed the jig in place and used the clamps to switch out the aluminum pieces. Below is a picture of the aluminum frame mounted in the jig, which was then screwed to the table.

|

| Cutting Aluminum w/ Jig |
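The reason the fixed jig matters is that every frame section then shares one work zero, so coordinates from the 2D drawing map to the same machine coordinates no matter which piece is clamped in. A minimal sketch of that translation is below. The jig position and the hole location are hypothetical numbers chosen for illustration, not measurements from our setup, and the names are invented.

```python
from typing import NamedTuple


class Point(NamedTuple):
    x: float
    y: float


# Hypothetical location of the jig's zero point in machine coordinates (mm).
JIG_ZERO = Point(150.0, 100.0)


def to_machine(part_point: Point, work_zero: Point = JIG_ZERO) -> Point:
    """Translate a point from drawing (part) coordinates to machine coordinates."""
    return Point(part_point.x + work_zero.x, part_point.y + work_zero.y)


if __name__ == "__main__":
    hole_in_drawing = Point(60.0, 150.0)  # hypothetical hole center on the drawing
    print(to_machine(hole_in_drawing))
```

Because the jig is screwed into a pocket in the table, `JIG_ZERO` only has to be found once. Swapping in the next piece of aluminum does not change it.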

Due to the symmetry of our design, the opposing sides of the aluminum had the same cuts for the thrusters and for connecting the different sections. Each side of the submersible had at least four holes for mounting the thruster. The large hole fits our thruster grill and the three small holes are for M4 bolts. In addition to these four holes, two of the four sides have four more holes (for a total of eight holes). These holes are for M6 bolts and will be used to attach either the front assembly, rear thruster, or a different aluminum section.

|

| Four Holes |

|

| Eight Holes |
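Since opposing sides share the same cuts, a hole pattern only has to be laid out once and can then be reused on each side. The sketch below generates centers for three bolt holes around a larger central hole. It assumes the three M4 holes are equally spaced on a circle around the thruster hole, which is a guess for illustration, and the center and radius are hypothetical values rather than dimensions from our drawings.

```python
import math


def bolt_circle(center, radius, count, start_deg=90.0):
    """Return `count` hole centers equally spaced on a circle around `center`."""
    cx, cy = center
    points = []
    for i in range(count):
        angle = math.radians(start_deg + i * 360.0 / count)
        x = round(cx + radius * math.cos(angle), 3)
        y = round(cy + radius * math.sin(angle), 3)
        points.append((x, y))
    return points


if __name__ == "__main__":
    # 5 in = 127 mm face width, so 63.5 mm is the centerline of a face.
    thruster_center = (63.5, 150.0)  # hypothetical position along the 12 in length
    for x, y in bolt_circle(thruster_center, radius=30.0, count=3):
        print(f"M4 hole at ({x}, {y}) mm")
```

The same list of points can be fed to the drawing for the opposite side, which is what keeps the two faces aligned.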

After cutting out the aluminum we used a knife and de-burring tool to get rid of any sharp edges on the aluminum.

|

| De-Burring |

If you have been watching the blog closely you will have realized that a couple of holes seem to be missing: those for the downward-facing LIDAR and the tether cord grip. At the time we cut out the aluminum frame we were unsure of both, so we decided to wait on cutting these out until we were sure about the design.

After we had cut out the aluminum frame we decided to cut the cord-grip hole using a drill press. We were able to get a relatively precise hole without the hassle (and time sink) of re-zeroing a CNC machine. We also purchased a 1/2"-14 NPT tap and threaded the hole for the cord grip.

|

| Hole for Tether |
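For readers unfamiliar with the designation, 1/2"-14 NPT means a nominal 1/2 inch pipe size with 14 threads per inch, cut on the standard NPT taper of 1 in 16 on diameter (3/4 inch per foot). The short sketch below works out what those numbers imply. The 0.25 inch wall thickness is a hypothetical value used only to show the arithmetic, not a measurement of our frame.

```python
MM_PER_INCH = 25.4
THREADS_PER_INCH = 14        # the "14" in 1/2"-14 NPT
TAPER_ON_DIAMETER = 1 / 16   # standard NPT taper: 1 in 16 on diameter


def pitch_mm(tpi: int = THREADS_PER_INCH) -> float:
    """Distance between adjacent thread crests, in millimetres."""
    return MM_PER_INCH / tpi


def threads_in_length(length_in: float, tpi: int = THREADS_PER_INCH) -> float:
    """Number of thread turns that fit in a given axial length."""
    return length_in * tpi


def diameter_change_in(length_in: float) -> float:
    """How much the thread diameter changes over a given axial length."""
    return length_in * TAPER_ON_DIAMETER


if __name__ == "__main__":
    wall_in = 0.25  # hypothetical wall thickness in inches
    print(f"pitch: {pitch_mm():.3f} mm")
    print(f"threads through wall: {threads_in_length(wall_in)}")
    print(f"diameter change through wall: {diameter_change_in(wall_in)} in")
```

The taper is what lets an NPT fitting wedge tighter as it is screwed in, which is why the hole needs a tapered pipe tap rather than a straight one.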

The final product looks quite nice and was manufactured using only 2D vector drawings, a CNC machine, and a few hours.

|

| Isometric View of Rear Frame |

Labels: Engineering, ROV, AUV, Mechanical, Prototype, Sub Prototype

8.10.2015

Watertight Compartment – Construction

After designing our watertight compartment we left most of the construction to professional machinists and welders. Our previous waterproof compartment post shows the three parts we needed machined and also gives an idea of what the final product will look like. This post will give you an idea of how the parts look and fit together in real life.

Machined Parts

As mentioned above, we had three parts machined for the waterproof section. We can't tell you much about how they were made, only that they were cut out with a CNC milling machine. Darin Marion from Porter's Precision Machining made the parts for us. Below are pictures of each of the parts (see this post for a cool "Idea to Finished Product" comparison!):

| Plug Housing |

|

| Plug |

|

| Back End |

Welding

After making the inserts for our aluminum frame we needed to have the parts welded in place. The plug housing and back end were welded to a 12-inch section of our extruded aluminum body. Again, we had the welding done professionally. We used Cumberland Ironworks (in Pownal, Maine), which welded our parts within one day. Below is a picture of the welded midsection.

| Welded Compartment |

Assembly

The last thing we had to do was add all of our accessories and assemble the compartment. We threaded on each of our cord grips and mounted the Presta valve using Loctite. We also bent some chain to fit over two M6 bolts that we designed to thread radially into the plug. These bolts and the chain give us a good handle for applying equal pressure to the entire plug, which makes it much easier for one person to pull the plug out on the fly. The pictures below show the mounted cord grips, the finished plug, and our plug/wire setup.

| Cord Grips in Back End |

|

| Finished Plug |

|

| Plug w/ Wires and Chain |

Labels: Engineering, ROV, AUV, Mechanical, Prototype, Sub Prototype
